Introduction to Server Motherboard Architecture

Server motherboards form the foundation of enterprise computing infrastructure, acting as the central hub that connects and coordinates critical system components such as CPUs, memory modules, expansion slots, and high-speed interconnects. Unlike desktop motherboards, server motherboards are specifically engineered for:

- Reliability – continuous operation in mission-critical environments

- Scalability – support for multiple CPUs, memory channels, and expansion cards

- Data center performance – optimized for 24/7 workloads under demanding conditions

Evolution of Server Motherboards

Modern server motherboard design has advanced rapidly with the integration of next-generation technologies such as:

- PCIe 5.0 and 6.0 – delivering higher bandwidth for GPUs and accelerators

- DDR5 memory interfaces – enabling increased density and speed

- CXL (Compute Express Link) – supporting coherent memory sharing across CPUs and accelerators

These innovations drive the need for High Density Interconnect (HDI) PCB design, ensuring that dense routing can be achieved while maintaining signal integrity and efficient power delivery.

Engineering and Design Challenges

The complexity of today’s server motherboards extends beyond conventional PCB challenges. Engineers must carefully address:

- Material selection – balancing cost, performance, and thermal stability

- Impedance control – essential for high-speed differential pairs

- Thermal management – ensuring efficient heat dissipation across CPUs and GPUs

- Manufacturing process optimization – achieving high yield with advanced HDI stack-ups

Ultimately, server motherboard designers must balance performance, cost efficiency, and compliance with enterprise reliability standards.

Server Motherboard Design Fundamentals

Core Components and PCB Layout in Server Motherboards

The architecture of server motherboards integrates multiple critical subsystems that must be carefully arranged within the PCB layout. The key components include:

- CPU sockets – designed for enterprise-grade processors with high core counts

- Memory slots – supporting DDR4 and DDR5 configurations with ECC capability

- Chipset components – responsible for I/O management and system control

- Expansion slots – PCIe Gen5/6 connectors for add-in cards such as GPUs, other accelerators, and storage controllers

The physical layout of server motherboards follows strict principles to optimize signal routing and reduce electromagnetic interference (EMI):

- CPU sockets are typically placed at the center of the board to minimize trace length.

- Memory slots are positioned symmetrically to ensure matched impedance and skew control.

- Chipsets and controllers are strategically located to balance data flow and thermal management requirements.

In addition, power delivery networks (PDNs) are critical in server motherboard design, requiring:

- Multiple voltage rails with precise regulation

- Low-noise distribution across high-current loads

- The ability to handle dynamic load variations while maintaining system stability
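One common way to turn these PDN requirements into a single design number is the target-impedance estimate: the allowed ripple voltage divided by the worst-case load step. The sketch below is illustrative only; the rail voltage, ripple budget, and current step are assumed values, not figures for any particular platform.

```python
def pdn_target_impedance(rail_voltage_v, ripple_fraction, transient_current_a):
    """Return the target PDN impedance in ohms.

    Z_target = (rail voltage * allowed ripple fraction) / transient current step
    """
    if transient_current_a <= 0:
        raise ValueError("transient current must be positive")
    return rail_voltage_v * ripple_fraction / transient_current_a


# Hypothetical CPU core rail: 1.0 V, 3% ripple budget, 100 A load step.
z_target = pdn_target_impedance(1.0, 0.03, 100.0)
print(f"Target impedance: {z_target * 1e3:.2f} mOhm")
```

The lower the rail voltage and the larger the load step, the lower the impedance the regulators, planes, and decoupling capacitors have to hold across frequency.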

Signal Integrity and High-Speed Design in Server Motherboards

Modern server motherboards must achieve outstanding signal integrity to support high-speed digital interfaces exceeding 28 Gbps. This necessitates advanced PCB design techniques, including:

- Controlled impedance routing

- Differential pair design with strict spacing rules

- Optimized via structures to reduce stubs and reflections

To ensure reliable data transmission, engineers must address:

- Crosstalk mitigation through proper trace separation and shielding

- Skew control to maintain synchronization between differential signals

- Return path continuity to guarantee stable reference planes
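To make the skew-control item concrete, a timing budget can be converted into an allowable length mismatch using the propagation delay of the trace. The following is a rough sketch that assumes a uniform effective dielectric constant; the `dk_eff` value and the 1 ps budget are hypothetical inputs, not requirements from any interface specification.

```python
C_MM_PER_PS = 0.299792458  # speed of light in vacuum, in mm per picosecond


def delay_ps_per_mm(dk_eff):
    """Propagation delay of a trace in ps/mm for an effective dielectric constant."""
    return dk_eff ** 0.5 / C_MM_PER_PS


def max_length_mismatch_mm(skew_budget_ps, dk_eff):
    """Largest P/N length difference that stays within the skew budget."""
    return skew_budget_ps / delay_ps_per_mm(dk_eff)


# Assumed values: effective Dk of 3.6 and a 1 ps intra-pair skew budget.
print(f"Delay: {delay_ps_per_mm(3.6):.2f} ps/mm")
print(f"Allowed mismatch: {max_length_mismatch_mm(1.0, 3.6):.3f} mm")
```

In practice the effective dielectric constant differs between microstrip and stripline layers, so the conversion would be repeated per routing layer.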

Server Motherboard Stack-up Design Considerations

Layer stack-up plays a pivotal role in server motherboard design because multiple high-speed interfaces coexist within the same PCB. A well-optimized server motherboard stack-up design ensures:

- Adequate isolation between signal, power, and ground layers

- Balanced copper distribution for thermal and mechanical stability

- Reduced manufacturing complexity while maintaining cost efficiency
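Two of these goals can be screened early with very little tooling. The sketch below models a stack-up as a simple list of layers and checks mirror symmetry about the board center, and whether every signal layer sits next to a ground plane. The layer roles, copper weights, and the 8-layer example are hypothetical, and real stack-up tools also account for dielectric thickness and material.

```python
def is_symmetric(stackup):
    """True if the layer list reads the same from the top and from the bottom."""
    return stackup == stackup[::-1]


def signals_missing_reference(stackup):
    """Return indices of signal layers that have no adjacent ground plane."""
    missing = []
    for i, (role, _) in enumerate(stackup):
        if role != "signal":
            continue
        neighbors = stackup[max(i - 1, 0):i] + stackup[i + 1:i + 2]
        if not any(r == "ground" for r, _ in neighbors):
            missing.append(i)
    return missing


# Hypothetical 8-layer stack-up: (layer role, copper weight in oz).
stackup = [
    ("signal", 0.5), ("ground", 1.0), ("signal", 0.5), ("power", 2.0),
    ("power", 2.0), ("signal", 0.5), ("ground", 1.0), ("signal", 0.5),
]
print("Symmetric:", is_symmetric(stackup))
print("Signal layers without ground reference:", signals_missing_reference(stackup))
```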

Advanced HDI PCB Design for Server Motherboards

HDI Adoption Criteria in Server Motherboards

The adoption of HDI PCB design for server motherboards is driven by technical requirements that cannot be met using conventional PCB technologies. Key factors include:

- Fine-pitch packages – CPU sockets, chipsets, and BMC components now feature BGAs at 0.5 mm pitch or finer, with advanced designs requiring 0.3–0.4 mm pitch and VIPPO (Via-In-Pad Plated Over) solutions.

- High-speed fabrics – PCIe Gen5/6, CXL, and NVLink interfaces demand short vertical transitions and minimal stub lengths to maintain signal integrity at per-lane rates of 32 Gbps and beyond.

- DDR5 memory topologies – RDIMM/LRDIMM configurations require tightly controlled impedance and skew management achievable only through HDI stack-up architectures.

- I/O escape density – Around CPUs and GPUs, routing pressure drives the use of microvias, avoiding excessive layer counts or costly back-drilling.

- Mechanical constraints – Form factors such as EEB, CEB, and custom server card outlines limit available routing channels, making HDI indispensable.
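The stub-length concern in the high-speed fabrics item can be illustrated with the quarter-wave approximation, in which an unused via barrel behaves as a resonator at roughly f = c / (4 · L · √Dk). This is a first-order sketch; the stub lengths and dielectric constant are assumed values, and pad and anti-pad capacitance usually pull the real resonance lower.

```python
C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def stub_resonance_ghz(stub_length_mm, dk):
    """Quarter-wave resonant frequency of a via stub, in GHz."""
    if stub_length_mm <= 0:
        raise ValueError("stub length must be positive")
    stub_m = stub_length_mm * 1e-3
    return C_M_PER_S / (4.0 * stub_m * dk ** 0.5) / 1e9


# Assumed dielectric constant of 3.6; stub lengths chosen for illustration.
for stub_mm in (2.0, 1.0, 0.3):
    print(f"{stub_mm:.1f} mm stub -> ~{stub_resonance_ghz(stub_mm, 3.6):.1f} GHz")
```

For comparison, a 32 GT/s NRZ link has its Nyquist frequency at 16 GHz, so the aim is to keep the stub resonance well above that, which is what short microvia transitions help achieve.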

HDI Stack-up Design Considerations for Server Motherboards

Translating design requirements into an HDI server motherboard stack-up involves systematic analysis of density, signal integrity, and manufacturability. Important guidelines include:

- BGA pitch and I/O density directly determine HDI order selection and the number of outer microvia layers needed for escape routing.

- High-speed interconnects require outer-layer microvias to reduce stub length.

- DDR5 skew control benefits from spread-glass material selection and dielectric thickness optimization.

- Power delivery and thermal needs influence copper weight distribution and via-farm placement.

Stack-up design methodology for server motherboards typically involves:

- Analyzing BGA escape patterns around CPUs and memory.

- Determining the minimum HDI order required for clean routing, preferably using staggered microvias.

- Selecting a consistent dielectric material family for stable electrical performance.

- Back-solving impedance targets to finalize trace geometries and plating allowances.
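The first step of this methodology, escape analysis, often starts with a simple count of how many traces fit between adjacent BGA pads. The sketch below uses the geometric constraint that n traces need n trace widths plus n + 1 clearances inside the pad-to-pad gap. The pad diameters are illustrative assumptions, not vendor land patterns, and the 75/75 µm trace and space is taken as the working rule.

```python
def traces_per_channel(pitch_um, pad_um, trace_um, space_um):
    """Count traces that fit between two adjacent BGA pads.

    n traces need n trace widths plus n + 1 clearances within the gap,
    so n <= (gap - space) / (trace + space).
    """
    gap_um = pitch_um - pad_um
    return max(0, (gap_um - space_um) // (trace_um + space_um))


# Pad diameters below are assumed for illustration only.
for pitch_um, pad_um in [(1000, 450), (500, 250), (400, 200)]:
    n = traces_per_channel(pitch_um, pad_um, trace_um=75, space_um=75)
    print(f"{pitch_um / 1000:.1f} mm pitch: {n} trace(s) per channel")
```

With these assumed pads, the 0.4 mm case leaves no room for a 75 µm trace between pads, which is the situation where escapes have to drop through microvias or via-in-pad structures instead of routing on the surface.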

Microvia Design and VIPPO in HDI Server Motherboards

Microvia and VIPPO structures are central to HDI server motherboard design, where performance and manufacturability must be balanced:

- Laser microvias – typically 75–100 µm diameter, with aspect ratios ≤ 1:1 for plating reliability.

- VIPPO implementation – requires controlled fill materials, planarity specifications, and optimized over-plating thickness to support fine-pitch BGAs at 0.3–0.4 mm pitch.

- Staggered vs. stacked microvias – staggered designs are preferred for higher yield and lower cost; stacked vias, if unavoidable, should be limited to two pairs per side, with attention to copper balance and lamination stress.
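The aspect-ratio guideline above is easy to check during stack-up planning. The sketch below treats the microvia depth as the thickness of the dielectric it crosses and compares depth to diameter against the 1:1 limit; the example thicknesses are assumed values.

```python
def microvia_aspect_ratio(depth_um, diameter_um):
    """Depth-to-diameter ratio of a laser microvia."""
    if diameter_um <= 0:
        raise ValueError("diameter must be positive")
    return depth_um / diameter_um


def meets_plating_guideline(depth_um, diameter_um, max_ratio=1.0):
    """True if the microvia stays within the aspect-ratio limit."""
    return microvia_aspect_ratio(depth_um, diameter_um) <= max_ratio


# Assumed dielectric thicknesses paired with the 75-100 um diameters from the text.
for depth_um, diameter_um in [(60, 100), (75, 75), (90, 75)]:
    status = "ok" if meets_plating_guideline(depth_um, diameter_um) else "too deep"
    print(f"{depth_um} um deep, {diameter_um} um wide: {status}")
```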

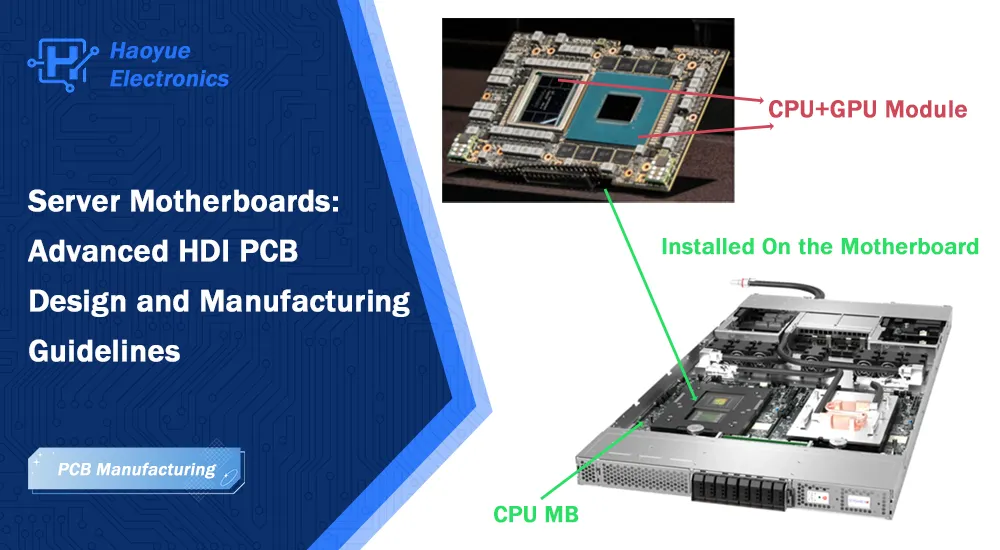

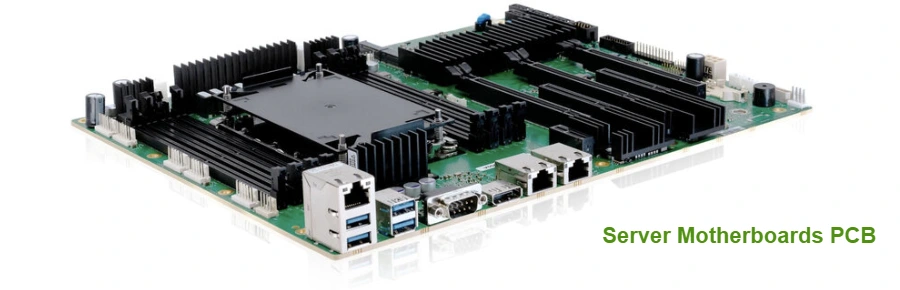

Server Motherboards PCB

Server Motherboard Types and Applications

Enterprise Server Motherboards Classifications

Enterprise server motherboards are designed to meet diverse workload requirements. They can be classified into several common categories:

- Dual CPU Server Motherboards

  - Most widely used in enterprise environments

  - Feature two CPU sockets with integrated memory controllers

  - Support symmetric multiprocessing (SMP) for balanced processing power and memory bandwidth

  - Ideal for general-purpose servers, virtualization, and database workloads

- Single CPU Server Motherboards

  - Employed in specialized applications prioritizing power efficiency or processing density

  - May include additional expansion slots or specialized interfaces (e.g., storage controllers, network acceleration)

  - Well-suited for small business servers, edge servers, or dedicated application servers

- High-Performance Computing (HPC) and AI Accelerator Platforms

  - Use specialized socket configurations and high-bandwidth interconnects

  - Designed to support GPU computing, FPGA boards, or custom ASIC accelerators

  - Require the most advanced HDI PCB technologies to manage extreme I/O densities and power delivery challenges

Server Motherboards for Data Center Applications

In data center environments, server motherboards face unique constraints and requirements beyond basic functionality:

- Thermal Management

  - Must align with rack-level airflow patterns and variable ambient conditions

  - Component placement and heat dissipation strategies are critical

- Serviceability and Reliability

  - Require easy access for field-replaceable units (FRUs)

  - Must maintain structural integrity during shipping, installation, and maintenance cycles

  - Connector placement and cable routing optimized for standard rack configurations

- Power Efficiency

  - Increasingly vital as operators seek to reduce operating costs and environmental impact

  - Server motherboard power delivery systems must achieve high conversion efficiency

  - Support for dynamic voltage and frequency scaling (DVFS) and other advanced power management features
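The benefit of DVFS can be approximated with the usual first-order model of CMOS dynamic power, P ≈ C · V² · f. The sketch below compares an operating point against a baseline under that model. It ignores leakage and fixed platform power, and the scaling factors are assumed values rather than measurements.

```python
def relative_dynamic_power(voltage_scale, frequency_scale):
    """Dynamic power relative to baseline under the P ~ C * V^2 * f approximation."""
    return voltage_scale ** 2 * frequency_scale


# Hypothetical operating point: 90% of nominal voltage, 80% of nominal frequency.
ratio = relative_dynamic_power(0.9, 0.8)
print(f"Dynamic power vs. baseline: {ratio:.1%}")
```

Because voltage enters as a square, lowering voltage together with frequency saves more power than lowering frequency alone, which is why voltage regulators on the board need to support fast, fine-grained set-point changes.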

Manufacturing Considerations and DFM Guidelines

DFM Guidelines for Server Motherboards

The transition from HDI PCB design to large-scale manufacturing of server motherboards requires careful attention to Design for Manufacturing (DFM) practices. Key considerations include:

- Trace and space geometries

  - Typically 75/75 µm for outer layers

  - Must account for copper thickness variations and impedance control requirements

- Drill-to-copper spacing

  - Maintain ≥ 200 µm clearance on inner layers

  - Reduces Conductive Anodic Filament (CAF) risk and improves drill registration tolerance

- Via structure design

  - Aspect ratio limits and proper annular rings are critical for reliable plating

  - Ensures long-term mechanical and electrical integrity

- Copper balance and symmetry

  - Large server motherboards are susceptible to panel warpage

  - Use thieving patterns and copper fill strategies to maintain uniform distribution without disturbing routing or power planes
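Numeric guidelines such as the 75/75 µm trace and space and the 200 µm drill-to-copper clearance lend themselves to a simple automated check. The sketch below is a minimal illustration with a hypothetical rule table and feature records. Production DFM checks run inside CAM tools against the fabricator's actual capability limits.

```python
# Rule values taken from the guidelines above; the names are hypothetical.
RULES = {
    "min_trace_um": 75,
    "min_space_um": 75,
    "min_drill_to_copper_um": 200,
}


def dfm_violations(feature):
    """Return the names of the rules that a measured feature violates."""
    checks = [
        ("min_trace_um", feature.get("trace_um")),
        ("min_space_um", feature.get("space_um")),
        ("min_drill_to_copper_um", feature.get("drill_to_copper_um")),
    ]
    return [rule for rule, value in checks
            if value is not None and value < RULES[rule]]


# Hypothetical measurements pulled from a layout report.
features = [
    {"name": "outer_route_1", "trace_um": 75, "space_um": 70},
    {"name": "inner_via_17", "drill_to_copper_um": 180},
    {"name": "outer_route_2", "trace_um": 90, "space_um": 100},
]
for feature in features:
    print(feature["name"], dfm_violations(feature) or "ok")
```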

Material Selection for HDI Server Motherboards

Choosing the right materials for HDI server motherboards requires balancing electrical performance, cost, and supply chain availability:

- Dielectric systems

  - Low-loss materials for 28 Gbps+ interfaces (PCIe Gen5/6, CXL)

  - Optimized FR-4 may still be suitable for lower-speed layers

- Resin family consistency

  - Using a single resin system across all dielectric layers simplifies impedance control and reduces manufacturing variability

- Glass style and skew control

  - Spread-glass options improve electrical uniformity and minimize skew, essential for DDR5 memory channels

- Thermal robustness

  - Materials must withstand multiple reflow cycles and extended operating temperatures

  - High-Tg, low-CTE laminates improve reliability under server motherboard thermal stresses
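The case for low-loss dielectrics can be estimated with the standard expression for dielectric attenuation, α = π · f · √Dk · Df / c, converted to dB per inch. The sketch below compares two laminates at 16 GHz. The Dk and Df values are generic assumptions chosen for illustration, not datasheet figures, and conductor loss and surface roughness are not included.

```python
import math

C_M_PER_S = 299_792_458.0               # speed of light in vacuum
DB_PER_NEPER = 20 * math.log10(math.e)  # about 8.686
M_PER_INCH = 0.0254


def dielectric_loss_db_per_inch(freq_ghz, dk, df):
    """Dielectric attenuation of a transmission line in dB per inch."""
    alpha_np_per_m = math.pi * freq_ghz * 1e9 * math.sqrt(dk) * df / C_M_PER_S
    return alpha_np_per_m * DB_PER_NEPER * M_PER_INCH


# Assumed laminate properties at 16 GHz (the Nyquist frequency of a 32 GT/s NRZ link).
laminates = {"standard FR-4 (assumed)": (4.2, 0.020), "low-loss (assumed)": (3.4, 0.003)}
for name, (dk, df) in laminates.items():
    loss = dielectric_loss_db_per_inch(16.0, dk, df)
    print(f"{name}: {loss:.2f} dB/inch")
```

Over the trace lengths typical of a large server board, the difference between the two cases accumulates quickly, which is why the highest-speed layers usually get the lower-loss material.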

Supply Chain and Reliability Considerations

- Supply chain management ensures stable sourcing of specialty laminates for HDI server motherboards.

- Reliability testing (thermal cycling, impedance coupons, X-ray validation) validates consistency across high-volume production.

- Long-term durability requires materials and processes that sustain 24/7 operation in enterprise and data center environments.

Haoyue Electronics: Advanced HDI Server Motherboard Manufacturing Capabilities

As the complexity of enterprise server motherboards continues to rise, selecting the right manufacturing partner is critical to achieving performance, reliability, and cost optimization. Haoyue Electronics specializes in HDI server motherboard manufacturing and advanced PCBA services tailored for enterprise and data center applications.

Comprehensive Manufacturing Capabilities

Our expertise covers the entire lifecycle of server motherboard production, including:

- Stack-up co-design services – translating conceptual designs into manufacturable HDI structures

- Material and dielectric selection – ensuring consistency, stability, and cost efficiency

- Impedance control engineering – coupon design, placement optimization, and first-article validation with full test data

Advanced HDI and VIPPO Implementation

Haoyue Electronics delivers manufacturing excellence with advanced processes such as:

- VIPPO (Via-In-Pad Plated Over) implementation with controlled fill processes

- Planarity control supporting fine-pitch BGA assemblies down to 0.3 mm

- X-ray inspection and void assessment protocols for consistent high-yield assembly across production volumes

Turnkey PCBA Services for Server Motherboards

We provide end-to-end PCBA solutions for the most demanding server motherboard programs, including:

- Stencil design optimization and reflow profile development

- Comprehensive functional and reliability testing

- Proven expertise in dual CPU server motherboards, enterprise storage boards, and high-speed networking designs

Partner with Haoyue Electronics

Whether your project involves enterprise server motherboards, GPU accelerator platforms, or custom storage solutions, Haoyue Electronics offers the advanced HDI PCB manufacturing and PCBA services needed to meet your requirements.

Frequently Asked Questions

What is a server motherboard and how does it work?

A server motherboard functions as the primary circuit board that interconnects all critical system components within a server platform. It provides electrical pathways for data communication between CPUs, memory modules, expansion cards, and peripheral devices while managing power distribution and system control functions. The motherboard incorporates specialized chipsets designed for server applications, offering enhanced reliability features, error correction capabilities, and support for enterprise-class components.

What are the key differences between server motherboards and desktop motherboards?

Server motherboards differ from desktop motherboards in several critical respects. They often provide multiple CPU sockets, enabling symmetric multiprocessing configurations that are uncommon in desktop applications. Memory capacity and bandwidth are substantially higher, with support for registered and load-reduced memory modules that provide greater density and reliability. Server motherboards also support redundant power supplies, and they incorporate advanced management interfaces and specialized expansion slots designed for enterprise storage and networking cards.

How do you choose the right server motherboard for data centers?

Selecting appropriate server motherboards for data centers requires careful evaluation of workload requirements, scalability needs, and operational constraints. Processing requirements should be matched to CPU socket configurations and memory bandwidth capabilities. I/O requirements must be assessed against available expansion slots and integrated interface options. Power efficiency, thermal characteristics, and serviceability features should be evaluated in the context of data center infrastructure and operational procedures.

What are the common challenges in server motherboard design and manufacturing?

Server motherboard design challenges encompass signal integrity management at high frequencies, power delivery system optimization, and thermal management across dense component layouts. Manufacturing challenges include HDI PCB fabrication with controlled impedance requirements, VIPPO implementation for fine-pitch components, and assembly processes that maintain reliability standards while achieving cost objectives. Supply chain management and component lifecycle planning represent additional challenges that must be addressed throughout the product development cycle.

What are the future trends in server motherboards with PCIe 5.0, DDR5, and CXL support?

Future server motherboard development is being driven by next-generation interface standards that demand increasingly sophisticated PCB design techniques. PCIe 5.0 implementation requires advanced signal integrity management and low-loss materials to support 32 GT/s data rates. DDR5 memory interfaces introduce new timing and power delivery requirements that influence motherboard architecture and component placement strategies. CXL protocol support enables coherent memory sharing and accelerator integration, creating opportunities for innovative server architectures while presenting new design challenges for signal routing and protocol implementation.
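The 32 GT/s figure translates into link bandwidth with a short calculation. The sketch below assumes NRZ signaling and the 128b/130b line encoding used by PCIe 5.0, and it reports raw per-direction bandwidth before protocol overhead. The function names are illustrative.

```python
def pcie_bandwidth_gbytes_per_s(rate_gt_s, lanes, encoding_efficiency):
    """Raw per-direction link bandwidth in GB/s, before protocol overhead."""
    return rate_gt_s * lanes * encoding_efficiency / 8.0


def nrz_nyquist_ghz(rate_gt_s):
    """Nyquist frequency of an NRZ link, which is half the transfer rate."""
    return rate_gt_s / 2.0


# PCIe 5.0 x16: 32 GT/s per lane with 128b/130b encoding.
bandwidth = pcie_bandwidth_gbytes_per_s(32.0, 16, 128 / 130)
print(f"PCIe 5.0 x16: ~{bandwidth:.1f} GB/s per direction")
print(f"Nyquist frequency: {nrz_nyquist_ghz(32.0):.0f} GHz")
```

The 16 GHz Nyquist frequency is the point at which channel loss is usually budgeted, which connects the data rate back to the material and via-stub choices on the board.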